Setting an Automotive Aerodynamics Benchmark

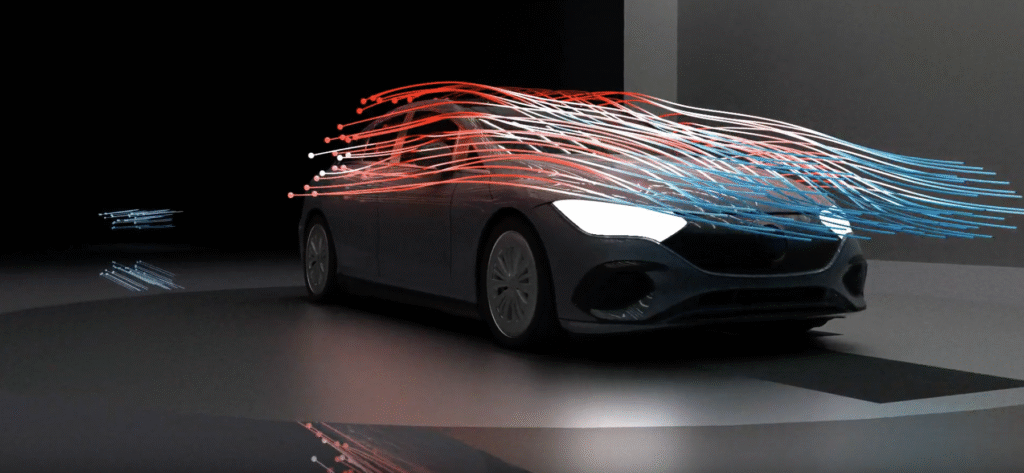

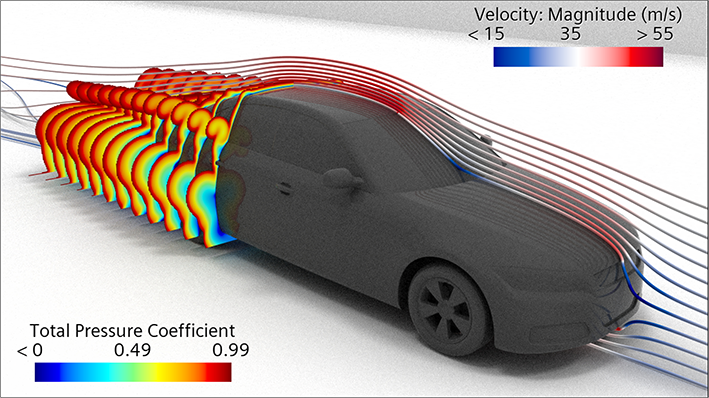

BMW sets the benchmark in automotive aerodynamics, pushing for extreme precision by utilizing scale-resolving transient simulations that incorporate rigid body motion for the wheels. By simulating the real-world rotation of the rims, the brand captures the complex effects of tire wake on overall vehicle performance.

”These simulations are designed to meticulously capture the complex airflow interactions between rotating tires and the vehicle’s overall aerodynamics,” explained NVIDIA’s Ian Pegler during the 2025 GTC presentation.

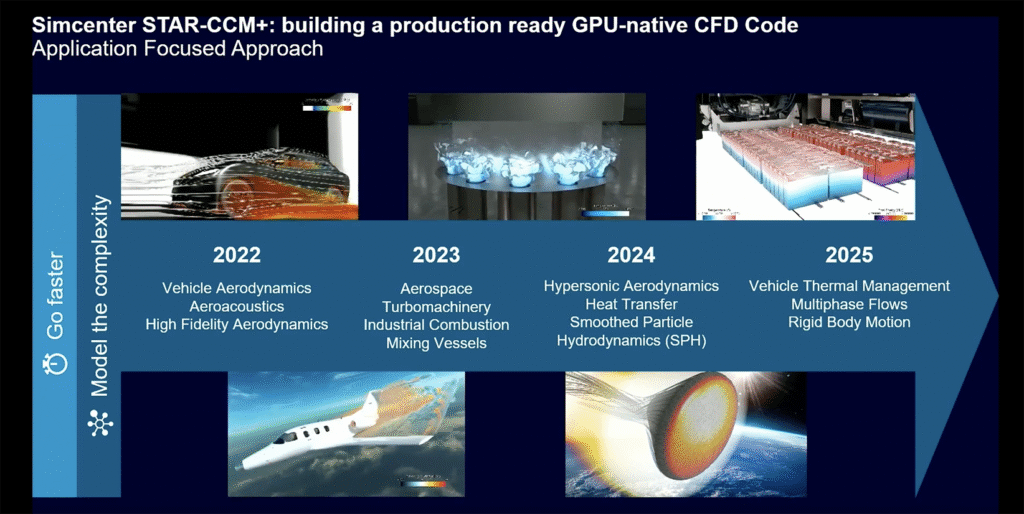

Siemens’ simulation landscape shifted dramatically in 2022, when the global PLM leader unveiled native GPU acceleration on server hardware for Simcenter STAR-CCM+, turning the page on traditional CPU-bound workflows. Partnering closely with NVIDIA to utilize CUDA, Siemens enabled critical solvers (including coupled and segregated approaches for complex fluid dynamics) to run directly on GPUs.

The performance leap is transformative: a single server node equipped with eight NVIDIA H100/H200 GPUs can deliver over 30x the speed of a standard CPU-only setup. According to NVIDIA, this means an 8-GPU node can replace more than 50 CPU-only nodes while providing equivalent or even faster turnaround times. This shift allows engineers to tackle massive, complex simulations in a fraction of the time, making high-performance simulation more cost-effective and accessible.
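To make the ratio concrete, the minimal sketch below converts a speedup factor into turnaround time. The 30x factor is the figure quoted above; the 48-hour baseline run is a purely hypothetical value chosen for illustration, and `turnaround_hours` is an illustrative helper name, not part of any Siemens or NVIDIA tool.

```python
def turnaround_hours(baseline_hours: float, speedup: float) -> float:
    """Wall-clock time after applying a speedup factor to a baseline run."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_hours / speedup


# Hypothetical baseline: a transient run that takes 48 hours on the CPU-only setup.
BASELINE_HOURS = 48.0
# Speedup quoted in the text for an 8-GPU node versus a standard CPU-only setup.
GPU_SPEEDUP = 30.0

gpu_hours = turnaround_hours(BASELINE_HOURS, GPU_SPEEDUP)
print(f"CPU-only: {BASELINE_HOURS:.1f} h, 8-GPU node: {gpu_hours:.1f} h")
```

Under these assumptions, a run that used to occupy two days finishes within a single working morning, which is what makes several design iterations per day plausible.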

While modeling the precise movement of the rims adds a crucial layer of fidelity, it traditionally demands significant computational resources. ”Moving this workload to the GPU enables us to maintain that high level of accuracy while drastically accelerating the simulation speed,” says Pegler. ”Bottom line: BMW’s results firmly demonstrate that GPU-driven workflows are the next-generation solution for Computational Fluid Dynamics (CFD).”

”However, this isn’t exclusively a BMW phenomenon,” he added. ”We are seeing a widespread industry shift toward GPUs. While the conversation around GPU acceleration for CFD has been circulating for years, I would argue that only within the last two to three years have we truly seen widespread adoption in practical, real-world applications.”

What are the Reasons for this Development?

”One is, I think, that a lot of the codes like STAR-CCM+ have now moved the solve completely onto the GPU. Previously there were pieces still on the CPU, whereas the majority of the solve is now running on the GPU, which is where you get these very high speedups, which makes price-performance sense.”

The codes Pegler refers to are CFD solvers such as Simcenter STAR-CCM+. In a CPU/GPU context, any part of the solve that stays on the CPU, together with the data transfers between CPU and GPU memory, limits the overall speedup. Running the majority of the solve on the GPU removes much of that bottleneck.
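Pegler's point about pieces of the solve remaining on the CPU can be illustrated with Amdahl's law, which bounds the overall speedup by the fraction of work that is not accelerated. The sketch below is a simplified model: the 50x per-kernel speedup and the GPU fractions are hypothetical values, the function name is illustrative, and CPU-GPU transfer costs are ignored.

```python
def amdahl_speedup(gpu_fraction: float, gpu_speedup: float) -> float:
    """Overall speedup when only `gpu_fraction` of the runtime is accelerated."""
    if not 0.0 <= gpu_fraction <= 1.0:
        raise ValueError("gpu_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    cpu_part = 1.0 - gpu_fraction          # share of runtime left on the CPU
    gpu_part = gpu_fraction / gpu_speedup  # accelerated share, shortened
    return 1.0 / (cpu_part + gpu_part)


# Hypothetical assumption: the accelerated part runs 50x faster on the GPU.
for fraction in (0.80, 0.95, 0.99):
    overall = amdahl_speedup(fraction, 50.0)
    print(f"{fraction:.0%} of the solve on GPU -> {overall:.1f}x overall")
```

With 80% of the solve on the GPU, the overall gain stays below 5x no matter how fast the GPU is; the large speedups only appear once nearly all of the solve has moved over.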

”Another important reason is that, obviously, the GPU technology has evolved a lot, particularly starting from A100, when the 80 gigs of GPU memory allowed these kinds of industrial-scale problems to be run on a GPU much more efficiently. The increase in memory and memory bandwidth, especially from H200 onwards, with 141 gigs of memory and 4.8 terabytes per second of memory bandwidth, has really made the GPU, and obviously B200 following it, a very good option, because the size of model you can run with that much memory is so much higher these days. Often these types of CFD simulation are memory-bandwidth bound, so more memory bandwidth often directly correlates to speed. Which is why we want more memory and more memory bandwidth. With more memory bandwidth things go faster, and we can run bigger models on the same GPU.”
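The two effects described in the quote, speed scaling with bandwidth and model size scaling with capacity, can be sketched with back-of-the-envelope arithmetic. Everything except the 80 GB and 141 GB capacities is a hypothetical assumption made for illustration: the mesh size, the bytes moved and stored per cell, and the two bandwidth values. Real solvers have far more complex memory behavior.

```python
def sweep_time_ms(cells: int, bytes_per_cell: float, bandwidth_tb_s: float) -> float:
    """Lower-bound time for one pass over the mesh if memory traffic is the only limit."""
    bytes_moved = cells * bytes_per_cell
    return bytes_moved / (bandwidth_tb_s * 1e12) * 1e3


def max_cells(memory_gb: float, bytes_stored_per_cell: float) -> int:
    """Largest mesh that fits in GPU memory for an assumed storage cost per cell."""
    return int(memory_gb * 1e9 // bytes_stored_per_cell)


CELLS = 100_000_000          # hypothetical mesh size
TRAFFIC_PER_CELL = 1_000.0   # hypothetical bytes read and written per cell per sweep
STORAGE_PER_CELL = 2_000.0   # hypothetical bytes stored per cell

for bandwidth in (2.0, 4.0):  # hypothetical memory bandwidths in TB/s
    ms = sweep_time_ms(CELLS, TRAFFIC_PER_CELL, bandwidth)
    print(f"{bandwidth:.1f} TB/s -> {ms:.0f} ms per sweep")

for memory in (80.0, 141.0):  # GPU memory capacities mentioned in the quote, in GB
    print(f"{memory:.0f} GB -> up to {max_cells(memory, STORAGE_PER_CELL):,} cells")
```

In this simplified model, doubling the bandwidth halves the time per sweep, and the larger memory raises the mesh size that fits on one GPU in direct proportion.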

The Power of Siemens’ and NVIDIA’s Simulation Solutions

It is clear that the combination of NVIDIA’s GPU power and Siemens Simcenter simulation solutions has created an interesting market position.

NVIDIA’s GPU technology has helped the German PLM world leader with, among other things:

- Significant Speedups in Simulation (CFD): Utilizing the NVIDIA CUDA platform, Siemens Simcenter STAR-CCM+ runs on GPUs to deliver performance where a single 8-GPU node can replace over 50 CPU-only nodes. For example, in turbine blade LES (Large Eddy Simulation) workflows, GPU acceleration reduced simulation times by over 77% compared to CPU clusters.

- Enhanced Design Iterations (Shift Left): The immense speed allows engineers to ”shift left”—performing high-fidelity, complex simulations early in the design phase, allowing them to test hundreds of aerodynamic configurations.

- Lowered Costs and Energy Consumption: GPU-based simulations offer a lower total cost of ownership (TCO) and reduce energy consumption significantly—sometimes to 35% of the power needed for equivalent CPU runs. Siemens and NVIDIA demonstrated that GPU usage could reduce required hardware investment by up to 40%.

- High-Fidelity Modeling of Complex Flows: GPU power enables simulation of complex aerodynamics, such as rotating rims or under-hood flows in cars, with higher fidelity (e.g., LES instead of RANS).

- Real-Time Visualization and Digital Twins: The partnership integrates NVIDIA Omniverse with Siemens’ Simcenter, allowing for photorealistic, real-time visualization of aerodynamic data, making it easier for engineering teams to analyze design flow qualities, such as drag and lift.

- Generative Simulation and AI Integration: Future-focused efforts include training AI models on NVIDIA infrastructure to suggest optimal aerodynamic shapes before a single full CFD simulation is run, speeding up development from years to months.

- In 2026, the partnership between Siemens and NVIDIA expanded to use the latter’s Blackwell GPUs, aiming for even faster performance and deeper AI integration into the Siemens Xcelerator platform.
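The percentages in the list above are easier to compare once they are converted into factors. The following minimal sketch does that conversion for the 77% time reduction and the 35% power figure; the function names are illustrative, and the inputs are simply the numbers quoted in the list.

```python
def speedup_from_time_reduction(reduction: float) -> float:
    """Speedup factor implied by a fractional reduction in run time."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in the range [0, 1)")
    return 1.0 / (1.0 - reduction)


def saving_from_remaining(remaining_fraction: float) -> float:
    """Fraction saved when a run needs only `remaining_fraction` of the original."""
    if not 0.0 <= remaining_fraction <= 1.0:
        raise ValueError("remaining_fraction must be between 0 and 1")
    return 1.0 - remaining_fraction


les_speedup = speedup_from_time_reduction(0.77)
power_saving = saving_from_remaining(0.35)
print(f"77% shorter run time -> {les_speedup:.2f}x speedup")
print(f"35% of the CPU power -> {power_saving:.0%} saved")
```

A time reduction of "over 77%" therefore corresponds to a speedup of a little more than 4x, a more modest figure than the node-replacement ratio, which compares a full 8-GPU node against individual CPU-only nodes.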

Why GPUs are Essential for AI

GPUs are of high importance in AI solutions because their architecture is designed for parallel processing, allowing them to perform thousands of small, simultaneous computations. This makes them ideal for the massive matrix multiplications and linear algebra foundational to deep learning and neural network training, which would take much longer on traditional CPUs.

- Massive Parallelism: Unlike CPUs, which are designed for sequential processing (handling tasks one by one), GPUs contain thousands of smaller, specialized cores that work together to slice through complex data-intensive tasks.

- Accelerated Training Time: Training complex AI models requires immense computational power. GPUs can reduce training times from days or weeks to hours, enabling faster experimentation, model refinement, and accelerated time-to-market.

- Real-time Inference: Once a model is trained, it must make predictions on new data (inference). GPUs provide the low-latency, high-throughput capability needed for real-time applications such as self-driving cars, chatbots, and facial recognition.

- Handling Large Datasets: Modern AI, specifically generative AI and large language models (LLMs) such as GPT-4, involves models with billions of parameters trained on very large datasets. GPUs provide the high memory bandwidth (e.g., HBM3e) and memory capacity needed to process these models and datasets efficiently.

- Specialized AI Hardware (Tensor Cores): Modern GPUs (e.g., NVIDIA H100/H200) include specialized hardware known as Tensor Cores, which are purpose-built to accelerate the matrix mathematics essential for AI, increasing performance by orders of magnitude compared to older or general-purpose hardware.

- Broad Software Ecosystem: Software ecosystems like NVIDIA’s CUDA and cuDNN libraries allow developers to easily leverage the raw power of GPUs, creating a standard platform for AI development.

CPUs (Central Processing Units) are ”general-purpose” managers that excel at sequential logic. AI, however, is less about complex logic and more about doing the same simple math billions of times in parallel. A GPU behaves more like a ”multi-tasking maestro,” enabling 10x–100x faster training than CPU-only systems.

The GPU Technology is Essential

Several factors are driving this GPU revolution:

• One important reason is that GPUs have thousands of smaller, specialized cores, unlike CPUs, which have a few powerful cores for sequential tasks. These smaller GPU cores excel at SIMD (Single Instruction, Multiple Data) execution, which allows them to simultaneously calculate particle physics, mesh elements or pixel changes.

• Furthermore, GPU acceleration – with speed increases of 10x to 100x+ – significantly reduces simulation time. For example, in fluid dynamics (CFD), a single GPU can perform at the level of over 400 CPU cores. In specific scenarios, GPU-accelerated simulations have shown over 700x performance gains compared to 4-core CPU simulations.

• A key benefit of GPUs is that they enable real-time and complex simulations: The speed of GPUs enables interactive real-time simulation, changing the workflow from a “simulate-overnight” approach to a “design-and-analyze-on-the-fly”. It also enables the use of more realistic, non-simplified models.

• Unified simulation and AI pipelines: Modern GPU platforms (such as NVIDIA CUDA-powered systems) allow researchers to run physically based simulations and AI (machine learning) model training within a unified environment, minimizing data transfer costs.

• Another important benefit is high memory bandwidth. GPUs use high-bandwidth memory (VRAM) that enables rapid retrieval of large amounts of data and bypasses the bottlenecks typical of CPU main memory. This high-speed memory is essential for handling high-resolution, complex models.

• In this context, it is common to talk about the value of democratizing high-performance computing (HPC). GPUs are more cost-effective and energy-efficient than traditional CPU clusters. A single GPU server can deliver comparable performance to a massive CPU cluster at a fraction of the hardware purchase and energy cost.
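The SIMD idea from the first bullet, one instruction applied to many mesh elements at once, can be sketched with a simple smoothing step on a 1D grid. This is a plain-Python illustration that runs sequentially; on a GPU, each cell update would be handled by its own thread. The `jacobi_step` function and the tiny grid are illustrative assumptions and are not taken from STAR-CCM+ or any other solver.

```python
def jacobi_step(values: list[float]) -> list[float]:
    """One data-parallel smoothing step on a 1D grid with fixed end values."""
    if len(values) < 3:
        return list(values)  # no interior cells to update

    def update(i: int) -> float:
        # Same instruction for every interior cell: average the two neighbours.
        # It reads only the old values, so all cells can be updated independently.
        return 0.5 * (values[i - 1] + values[i + 1])

    interior = [update(i) for i in range(1, len(values) - 1)]
    return [values[0], *interior, values[-1]]


# Hypothetical grid: left boundary held at 0.0, right boundary held at 1.0.
grid = [0.0, 0.0, 0.0, 0.0, 1.0]
for _ in range(3):
    grid = jacobi_step(grid)
print(grid)
```

Because no cell depends on another cell's new value within a step, the work scales naturally across thousands of cores, which is the property that makes mesh-based simulation a good fit for GPUs.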

Key Application Areas Transformed

• Computational fluid dynamics (CFD): Modeling complex flow patterns, aerodynamics and hydrodynamics, heat transfer, and electromagnetism.

• Autonomous vehicles (AV): High-quality sensor simulation and real-time decision-making for self-driving cars.

• Molecular dynamics: Simulation of thousands of atoms for drug development.

• Structural mechanics/FEA: Complex structural analysis with refined meshes.

Finally, did you know that STAR-CCM is an abbreviation for ”Simulation of Turbulent flow in Arbitrary Regions – Computational Continuum Mechanics”?